API company Kong is launching its open source AI Gateway today, an extension of its existing API gateway that allows developers and operations teams to integrate their applications with one or more large language models (LLMs) and access them through a single API. On top of this, Kong is launching several AI-specific features, including prompt engineering, credential management and more.

“We think that AI is an additional use case for APIs,” Kong co-founder and CTO Marco Palladino told me. “APIs are driven by use cases: mobile, microservices — AI just happens to be the latest one. When we look at AI, we’re really looking at APIs all over the place. We’re looking at APIs when it comes to consuming AI, we’re looking at APIs to fine-tune the AI, and AI itself. […] So the more AI, the more API usage we’re going to have in the world.”

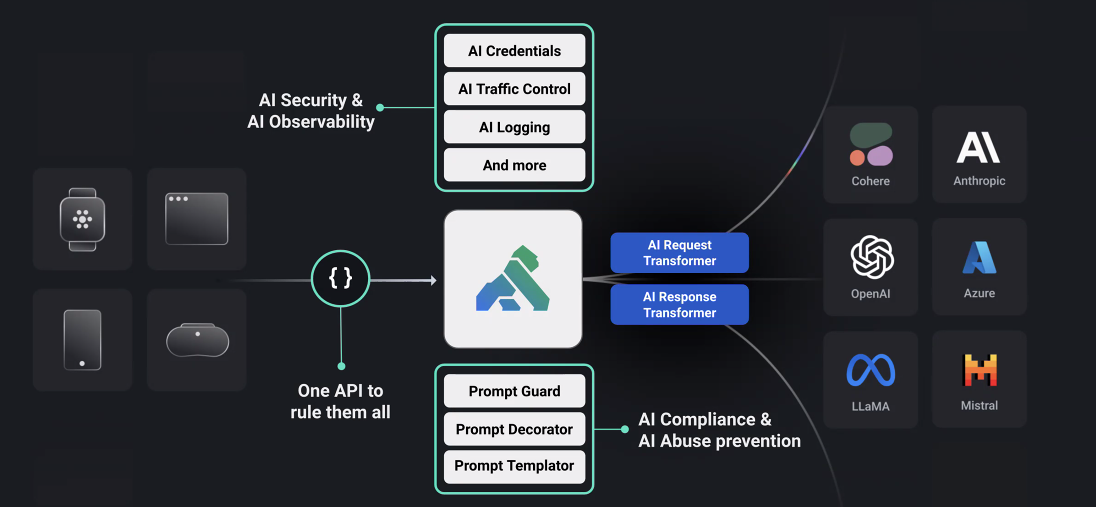

Palladino argues that while virtually every organization is looking at how to use AI, they are also afraid of leaking data. Eventually, he believes, these companies will want to run their models locally and maybe use the cloud as a fallback. For the time being, though, these companies also need to figure out how to manage the credentials for accessing cloud-based models, control and log traffic, manage quotas, etc.

“With our API gateway, we wanted to provide an offering that allows developers to be more productive when building on AI by being able to leverage our gateway to consume one or more LLM providers without having to change their code,” he noted. The gateway currently supports Anthropic, Azure, Cohere, Meta’s LLaMA models, Mistral and OpenAI.
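To make the "one API, many providers" idea concrete, here is a minimal Python sketch of how a gateway-style layer might map a single neutral request onto different upstream payload shapes. This is an illustration only, not Kong's implementation: the provider names, model names, payload shapes and the `build_upstream_request` helper are all hypothetical.

```python
from typing import Callable, Dict, Tuple

# An adapter turns a neutral (prompt, model) pair into an upstream payload.
Adapter = Callable[[str, str], dict]


def chat_style(prompt: str, model: str) -> dict:
    """Illustrative payload for an upstream that expects a message list."""
    return {"model": model, "messages": [{"role": "user", "content": prompt}]}


def completion_style(prompt: str, model: str) -> dict:
    """Illustrative payload for an upstream that expects a bare prompt."""
    return {"model": model, "prompt": prompt}


# Hypothetical routing table: provider name -> (adapter, default model).
ROUTES: Dict[str, Tuple[Adapter, str]] = {
    "provider-a": (chat_style, "model-a"),
    "provider-b": (completion_style, "model-b"),
}


def build_upstream_request(prompt: str, provider: str) -> dict:
    """Build the provider-specific payload for a neutral prompt."""
    adapter, model = ROUTES[provider]
    return adapter(prompt, model)
```

The application only ever calls `build_upstream_request(prompt, provider)`. Switching providers then becomes a configuration change in the routing table rather than a change to application code.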

The Kong team argues that most other API providers currently manage AI APIs in the same way as any other APIs. Yet by layering these additional AI-specific features on top of the API, the Kong team believes, it can enable new use cases (or at least make existing ones easier to implement). Using the AI response and request transformers, which are part of the new AI Gateway, developers can change prompts and their results on the fly to automatically translate them or remove personally identifiable information, for example.
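As a rough illustration of what rewriting a prompt on the fly can look like, the Python sketch below redacts email addresses and phone numbers before a prompt would be forwarded to a model. It is a simple regex stand-in under stated assumptions, not Kong's transformer plugins: the patterns, placeholder tokens and the `redact_pii` function are hypothetical, and real PII detection needs far broader coverage.

```python
import re

# Deliberately simple patterns; they will miss many real-world formats.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_pii(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


if __name__ == "__main__":
    prompt = "Contact jane@example.com or +1 415-555-0100."
    print(redact_pii(prompt))  # Contact [EMAIL] or [PHONE].
```

Running the same kind of function on the response path would cover the other direction, so model output is scrubbed before it reaches the application.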

Prompt engineering, too, is deeply integrated into the gateway, enabling businesses to enforce their guidelines on top of these models. This also means that there is a central point for managing these guidelines and prompts.

It’s been almost nine years since the launch of the Kong API Management Platform. At the time, the company that is now Kong was still known as Mashape, but as Mashape/Kong co-founder and CEO Augusto Marietti told me in an interview earlier this week, this was almost a bit of a last-ditch effort. “Mashape was going nowhere and then Kong is the number one API product on GitHub,” he said. Marietti noted that Kong was cash-flow positive in the last quarter — and isn’t currently looking for funding — so that worked out quite well.

Now, the Kong Gateway is at the core of the company’s platform and also powers the new AI Gateway. Indeed, existing Kong users only have to upgrade their current install to get access to all of the new AI features.

As of now, these new AI features are available for free. Over time, Kong expects to launch paid premium features as well, but the team stressed that this is not the goal for this current release.

techcrunch.com